Many experts debate, why to choose ELT approach over ETL? If this question is still on your table, then we at Scandic Fusion believe the answer is straightforward for the past 10 years. We have been strong advocates of ELT approach as that has proven to be the most efficient way to transform data, taking advantage of modern data warehousing platforms, especially since the column-based & clustered database technologies became affordable. This belief automatically challenges how objective the market research companies are, because they rather focus on BIG names with primary focus on ETL as a standalone supplement to your DWH/BI ecosystem.

With technologies rapidly evolving, many companies are reviewing the future direction of DWH/BI ecosystem. Paradigms do not change that often, but now is the right time to review what's next: is modern ELT the way to go, or what is your next-generation approach to handling dataflows within your new data warehouse ecosystem?

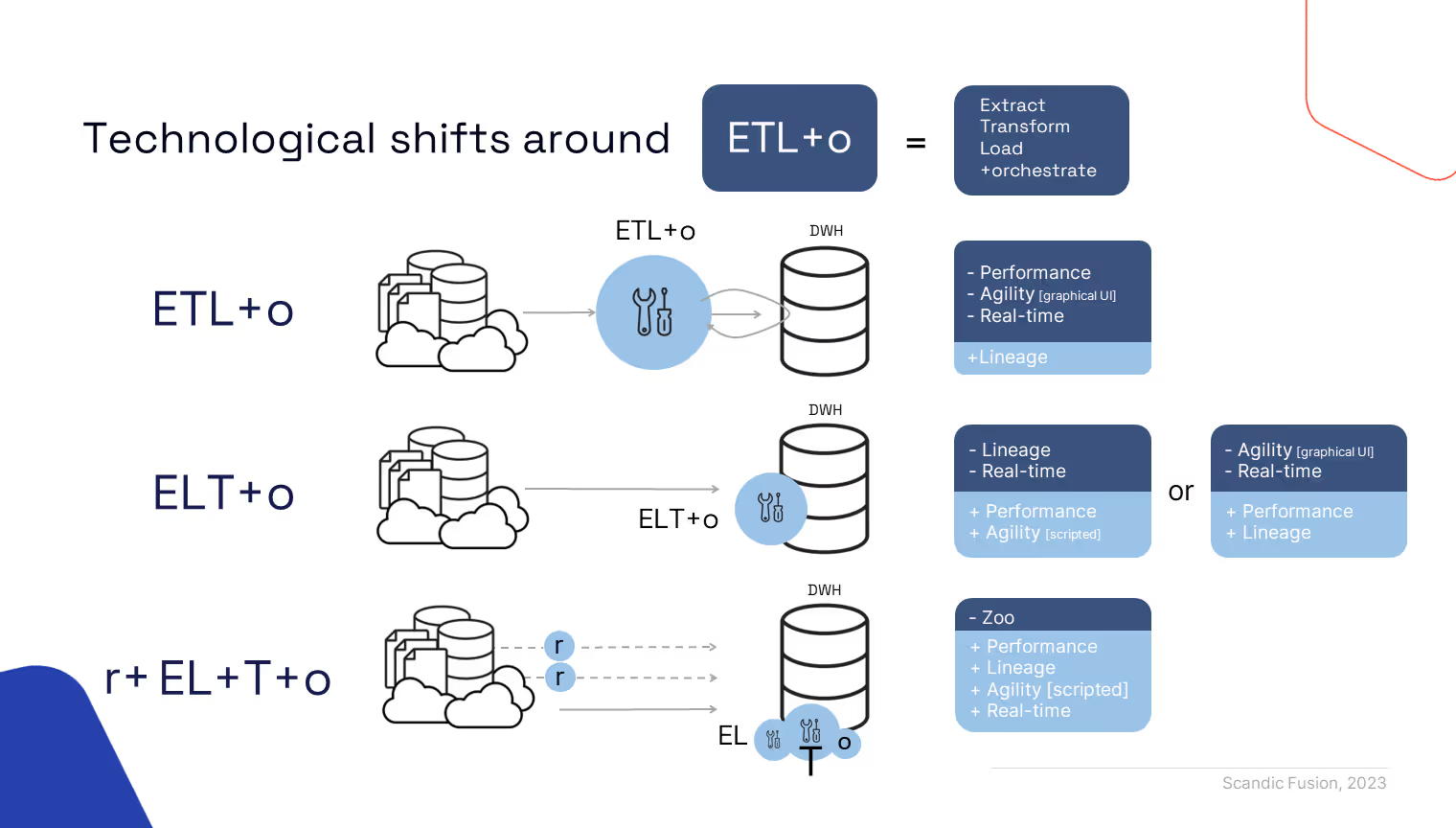

Long story short: ELT is not enough. It is more complex, because of more advanced business user needs (DEMAND) and a great variety of purpose-specific architectural options (SUPPLY). Thus, we tear apart and expand ELT to r+EL+T+o

r for real-time

Demand

Demand for freshness of valuable information has risen. If some years ago it was OK to have data as-of yesterday, financial period close, customer scoring, cross-selling &up-selling, customer 360, vendor 360, and other real-life business scenarios towards data analytics now require near-real time - information flowing in continuous manner for operational and strategic decisions.

But how to achieve [semi] real-time data acquisition?

Supply

Cloud-based sources, or SaaS, are on the rise. How do you acquire data - Pull or Push?

If pull, then you shall be able to call source system APIs. Many ELT tools find it hard to catch up with the latestdevelopments of standard software versions, not to speak of more specific ones,therefore you shall seek the tools, which are open to being extended withcustom code. Our strong recommendation is to seek the one, which enables Pythonscripting, because Python has become #1 programming language for on-prem andin-cloud component extensions.

If push, then we speak of data streaming using tools like KAFKA or Amazon SQS or Azure Event Hubs or alike… Make sure your scripting and data warehousing platform is ready to ingest semi-structured messages that are to be:

- stored first;

- structured later.

But if you still rely on good old relational data sources, or IaaS with full access to your source system, and you need to acquire real-time data, how do you do it? replication? virtualization?

Replication can be out-of-the-box, custom-coded and/or quite an expensive option.

E.g. Microsoft or SAP databases provide native CDC & replication options that are only meant to be operated in[semi] real-time manner.

E.g. community-built AirByte solution enables replication of PostgreSQL WAL files, but it falls short for handling large data volumes, due to which we faced the need to create a custom-coded solution ourselves.

E.g. Oracle offers expensive, yet high-performance replication from Oracle source to Oracle DWH (Golden Gate).

Virtualization is the new-old way of handling real-time needs towards operational/embedded (i.e. not so much transformed) data. This notion was old and died out because BI queries were too heavy to being handled well by operational data stores. Now, with SAP in-memory databases (or alike) underneath transactional ERP systems, it has become a valid option with high performance booster of engineered solution.

EL for Extract & Load

With "r for real-time" in place, you still need to have Extract & Load mechanism as part of your DWH ecosystem, for several reasons:

- Many replication/streaming components do not handle initial load or have insufficient performance;

- There might be data sources, which might not be real-time capable;

- Real-time solution costs might exceed the value you are getting from real-time data freshness!

So make sure to pick the right E&L component, which can extract data from any source (or it is capable of being extended with Python), supports bulk import into the selected DWH platform, and is an insignificant/small part of overall ELT costs. In other words, if we only need E&L, there is no need for investing into costly ELT component. Cloud vendors typically have their "cloud native" options that work just fine, e.g. Azure has Azure DataFactory (standalone) or Data Integration as part of Synapse, e.g. Google has Dataflow, etc. In on-prem scenario, we would rather pick open-source solution, e.g. Pentaho, than commercial alternatives which lack agility (e.g. how much development time you invest to build 100 staging dataflows).

T for transformations

Regardless of ELT tool you have picked, there has always been a dilemma whether you choose to:

- prioritize data flow lineage(declarative development of ELT) and sacrifice development agility;

- or do you favor speed of development (go SQL scripting), but business will lack visibility over the transformation flow of your data.

We observe (subjective, but fact!) that conservative decision makers choose lineage over agility. But what if you want both? Surprisingly, the new technological paradigm recognizes both needs and addresses them via specialized tool/approach for "T"!

E.g. DBT allows you to develop transformations in an SQL-scripted manner, having to add extra tags and follow some ground rules, especially, when you have to adapt to more complex scenarios(periodic facts, multi step facts, multi-source facts, etc.). It provides visual auto-generated documentation of the flow of data. It determines dependencies and auto-generates sequential or asynchronous execution of the overall transformation logic! DBT is available as an on-prem tool or cloud compute or cloud SaaS.

E.g. Google Cloud Platform has a similar tool to DBT called Dataform.

E.g. in SAP data warehouse cloud you write transformations as SQL scripts and get entity level lineage and flow dependency

One might ask, does it make sense to have T separated from EL (or r)? As long as we know that r+EL has replicated necessary source system data into staging and modern DWH database support shigh-performance data transformations (yes, we do know that!), it makes complete sense that you can have a dedicated T component with 100% push-down(all transformations executed on a database level).

o for orchestration

Having autonomous r+EL+T elements, you need a component, which orchestrates end-to-end data flow, hence "o" for orchestration!

Orchestration includes various scenarios: sequential/asynchronous/cyclic execution of data flow jobs, error handling and alerting, logging and monitoring and more.

E.g. in Azure we would take advantage of ADF or Synapse data integration to establish staging via EL, check that replication jobs are healthy via r, then call DBT jobs and react on "what if something goes wrong";

E.g. in GCP we would use cloud scheduler to call Pub/Sub, which calls cloud functions of r+EL+T tasks;

E.g. in SAP DWC orchestration is missing for a year already, but a promise (roadmap item) is on the table. Until then -use external tool that handles orchestration!

E.g. in on-prem scenario, we prefer using orchestration as part of ELT tool (e.g. Pentaho).

Summary

To respond to your business user needs and take advantage of modern technologies, you shall consider moving beyond ELT approach towards r+EL+T+o approach. This will introduce certain complexity, but with bright minds and considered choices, you will have excellent next-generation dataflows extending your DWH architecture!

.avif)